Episode 97: Porch Pirate Panic and the Paranoid Racism of Snitch Apps

Citations Needed | January 15, 2020 | Transcript

[Music]

Intro: This is Citations Needed with Nima Shirazi and Adam Johnson.

Nima Shirazi: Welcome to Citations Needed, a podcast on the media, power, PR, and the history of bullshit. I am Nima Shirazi.

Adam Johnson: I’m Adam Johnson.

Nima: Happy New Year, everybody. This is the first episode of 2020 for Citations Needed. Hope everyone who was able to take a break had a lovely break. We are now back for a new year of episodes. We are thrilled to have you all with us. Of course you can follow the show on Twitter @CitationsPod, Facebook Citations Needed, and please do support us through Patreon.com/CitationsNeededPodcast with Nima Shirazi and Adam Johnson. All your help through Patreon is so appreciated. We are 100 percent listener-funded. We would love to keep that going through this year and we cannot stress enough how our listeners enable us to do that.

Adam: Yeah, and of course you can always rate and subscribe to us on Apple Podcasts. We really appreciate that feedback.

Nima: Everywhere we turn, local media — whether TV, digital, radio — is constantly telling us about the scourge of crime lurking around every corner. This, of course, is not new. It’s been the basis of the local news business model since the 1970s.

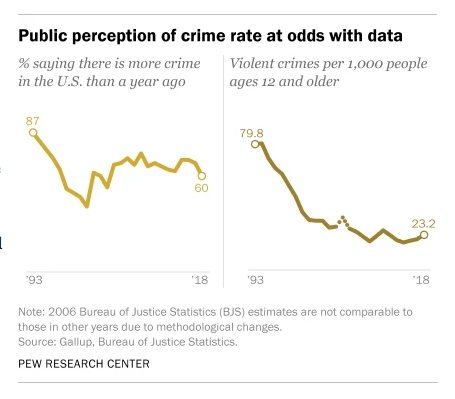

Adam: But what is new is the rise of surveillance and snitch apps like Amazon’s Ring doorbell systems and geo-local social media like Nextdoor, backed by real estate and other gentrifying interests working hand in glove with police, to provide a feedback loop of a distorted, hyped-up vision of crime. As we’ve noted before, despite crime rates’ falling nationally by 50 percent over the last 25 years, perception of crime is that it has increased almost every year Pew asks the question to Americans.

Nima: One of the major factors fueling this misconception is the feedback loop where media — both traditional and social — provide the ideological content for the forces of gentrification. Police focus their “law enforcement” on gentrifying low-income areas, local news reports on scourges of crime based on police sources, then both pressure and reinforce over-policing of communities of color, namely those getting in the way of real-estate interest designs. All of this is animated by an increase in police-backed surveillance tech, just like Amazon’s Ring.

Adam: On today’s episode, we will break down these pro-carceral interests, how they create a self-reinforcing cycle of racist paranoia, and how local “crime” reporting plays a role in creating this wildly distorted perception of “crime.”

Nima: Later on the show, we will be joined by two guests. First, Sarah Lustbader, senior legal counsel at The Justice Collaborative and contributor to The Appeal magazine. She was previously Senior Program Associate at the Vera Institute of Justice and a criminal defense attorney at The Bronx Defenders.

[Begin Clip]

Sarah Lustbader: People now feel like their worst impulses are being not only validated but encouraged. So if you are afraid by someone merely existing who has certain attributes that you have been taught to fear, maybe there’s a part of you that says, you know, ‘I really shouldn’t indulge this part of me.’ And then you have these apps that are sort of drawing it out and saying ‘No, no, no, you should.’

[End Clip]

Nima: We will also speak with Steven Renderos, co-director of MediaJustice, a national racial justice hub fighting for racial, economic and gender justice in the digital age.

[Begin Clip]

Steven Renderos: You know, there’s a lot of interests behind crime being a thing that is on the public consciousness, whether it’s for police budgets or to help sell the latest police tech or for real estate agents or for some other reason. Like, there are some moneyed interests in all of this that are definitely fueling the perception that crime is a thing.

[End Clip]

Adam: Before we go into this episode, I do want to say that this is a, for lack of a better term, a spiritual sequel to Episode 54, which was on how local crime reporting—the title was “Local ‘Crime’ Reporting as Police Stenography,” where we detailed the ways in which local crime reporting basically just sort of takes its cues from and, quite literally its script from, the police, and we wanted to sort of expand on that by incorporating these geo-local apps and snitch systems because we think they really add fuel to an already existing fire and have their own kind of media dynamic as well. So if you haven’t listened to that one, listen to it. If not, this should, standalone, make sense. But just to let you know, this is kind of a sequel to that episode from Episode 54.

Nima: So to start, here’s a little history about home security systems. The two biggest companies, Brinks and ADT—ADT stands for American District Telegraph—are the two oldest home security companies. Brinks was founded in 1859 and created a home security spinoff in the 1990s. ADT was founded, also in the 19th century, in 1874, in the New York area as a telegraph-based “call box” system that constituted the first residential security system network in the country. By the mid-20th century, ADT had developed burglar and fire-alarm technology. By the late ’80s, it was manufacturing and distributing those now familiar lawn signs reading “Secured by ADT.” By the 1990s, both companies were running home security system TV commercials depicting white suburban residents and the criminals who sought to harm them and steal their shit.

Adam: Yeah, the invention of the home security camera is attributed to Marie van Brittan Brown, a nurse who in 1966 filed a patent for her, “home security system utilizing television surveillance.” Brown worked late hours and became frustrated that the police were too slow to respond to emergency calls. Brown, a black woman, lived in Jamaica, Queens, a neighborhood predominantly populated by people of color, particularly with the rise of white flight.

Nima: According to an article on Timeline.com, Brown built upon already existing closed-circuit TV technology, which was originally developed to observe Nazi rocket testing, but obviously, post-war, had very different intentions. Timeline.com says this, quote: “By 2013, more than a dozen inventors had cited the Brown patent for their own devices.”

Adam: Ironically enough, historian Sarah Igo, author of the book The Known Citizen, has argued that with the growth of suburbia following World War II, that white homeownership came to view the right to private property and private space as a form of freedom to counter quote-unquote “authoritarianism,” presumably communism. And that the home surveillance system was one way of protecting and sort of shoring up the importance of the white middle-class home.

Nima: So this really reinforces already ongoing segregation in residential neighborhoods and elsewhere. It determines who belongs where, often just based on people’s appearances and, you know, you can really see this freedom narrative work to solidify the idea that more surveillance equals more safety and security. It’s like the cop-ification of homeowners and the new digital neighborhood watch. This is of course built on the worst assumptions and snap judgments that people have based on their own internal, deeply ingrained biases. You know, we’re supposed to not ever trust our neighbors and people walking outside our front door, but who do we trust? Implicitly, we trust cops and now we also are made to trust Amazon and the tech companies that create these surveillance apps.

Adam: Right.

Nima: So one of these apps is Nextdoor, a self-described “private social network”, wherein users can theoretically report on the goings-on within their own neighborhoods. It’s also advertised as a way for people to seek local help like last-minute babysitters or, you know, plumber recommendations, as well as a way for neighbors to, as they say, “bond.”

Adam: But instead of the twee service it sort of promotes itself as, Nextdoor has, for whatever reason, become the unofficial neighborhood watch platform breeding misanthropy and racism. For the purposes of researching the show, I signed up to Nextdoor and I will say that it does have a sort of 50 percent ‘Have you seen this puppy?’ ‘Who here wants this old chair?’ And it’s all kind of wholesome, but then every other post is, ‘Has anyone seen this person?’ Obviously a lot of “porch pirate” panic. ‘Has anyone seen this’ — uh, you know, sort of vague descriptions of people — ‘There’s a guy who walks around with a green hoodie, do you know who this person is?’ Which we’ll get into later, obviously breeds very not good qualities in people.

Nima: So even when it started out Nextdoor was heralded as a potential quote, “crime fighting” end quote platform. A 2013 PandoDaily article proclaimed that, “Nextdoor’s unexpected killer use case” was this: “Crime and safety.” That was only three years after Nextdoor premiered. And it’s somewhat common knowledge now that Nextdoor really encourages fastidious, angry, very distrustful behavior in its users. Outlets like BuzzFeed and the Twitter account Best of Nextdoor publish frivolous Nextdoor posts. It’s actually quite a view inside what actually happens through this neighborhood watch app. And these posts range from complaints about, you know, unripe avocados at Whole Foods — shame, shame, shame — to direct pleas for people to uh, turn off their Christmas lights. But these sort of neighborhood complain-y, whiny things are only one part of these platforms. The stakes actually wind up being much higher than just that. There are copious examples of racist comments and racial profiling on Nextdoor and a number of news outlets have reported on just how much racial profiling really does occur on these types of apps when people report on crime in their neighborhoods.

Adam: And we’re going to give you just a quick rundown from a very early story. One of the first outlets that reported this—from Fusion in 2015—a writer by the name of Pendarvis Harshaw documented a lot of these cases. It told the story about two black men who were on their way to the party at the home of a friend in East Oakland’s Ivy Hill neighborhood, and they got lost. The person whose house they were going to, who’s white, noticed on Nextdoor the users were warning of quote, “scary sketchy” men who were quote, “lingering.” One was quote, “in a white hoodie” the other a quote, “thin, youngish African American guy wearing a black beanie,” — at least they got the nomenclature right I suppose — “white t-shirt with dark open button down shirt over it, dark pants, tans shoes, gold chain.” When her friends arrived, the white woman whose house it was realized the posts were about them, people who were just trying to get to her party. Now for the record, even if they weren’t trying to go to a white person’s party, that doesn’t make it okay, just to be clear.

Nima: (Chuckles) For the record, also that.

Adam: This just shows you how prevalent this is or, sort of, the ways in which people sort of talk about, ‘Oh, they don’t belong here.’ And when you live in a neighborhood that’s 98 percent white notions of who does and doesn’t belong are obviously inherently racist because what other proxy are you using? ‘Oh, we don’t normally have tall people in this neighborhood.’ Well, okay.

Nima: Well, right, because suspicion is then encoded into these apps but also into these neighborhoods now as, like, a default setting. So now, you know, it just determines who belongs, who doesn’t, based on, again, nothing other than visual appearance or the sense that someone peering out behind their living room curtains just has, and it grows this kind of sense of fear. It breeds this fear and distrust and creates really like a virtual gated community.

Adam: Yeah. And there was, you know, these reports started trickling out, of racial profiling. Twitter accounts like Best of Nextdoor, which doesn’t only do racism but does feature it, which sort of shows the worst of the Nextdoor is very popular. It has almost half a million views. It’s usually sort of really inane or kind of extremely busybody things. Now, of course, that can be harmless. It can oftentimes not be harmless. But one of the things that’s also happened is that police departments as one would expect, are increasingly using these apps as surveillance systems and quote-unquote “crime fighting” systems. A 2016 Atlantic article detailed how police, particularly the Seattle Police Department, were using Nextdoor for quote, “crime fighting” and PR purposes. The article reported that 1,400 public agencies, mostly police departments, were on Nextdoor. The article also noted that Nextdoor approached the Seattle PD in 2014 and the department quote, “saw an opportunity.” This is from the article:

The department waited until there was a critical mass of Seattle residents on the platform — about 20,000 — before diving in. “It’s not our place to promote platforms,” Whitcomb says. “It’s our place to go where people are having conversations.”

Adam: Whitcomb is Sean Whitcomb, the public affairs director of the Seattle PD. From the article:

Seattle’s department encourages precinct officers to maintain a presence on the community pages for the neighborhoods they serve. The initiative is an example of what the department calls “micro-community policing”: an attempt to use hyper-local data to customize its approach to law enforcement. Officers can alert residents to crime trends, ask for feedback on policing initiatives, or simply introduce themselves and encourage neighbors to say hi to patrolling officers.

Nima: Aw, that’s so nice. Let’s just encourage them to say hi. You’re in the panopticon but, you know, you can give cookies to your local crime fighter, who’s also you!

Adam: A lot of what we talk about on the show is the ways in which these systems, which are set up to be ostensibly value-neutral or race-blind, are garbage in, garbage out. Of course, if police are going on an app that’s run by a bunch of busybody white homeowners, their vision of crime is obviously going to be filtered through that lens.

Nima: Yeah. So in 2017 after some of this reporting on the ubiquitous racial profiling that occurred on the Nextdoor app, Nextdoor, you know, started saying that they were going to address the issue. They put up something that said “Racial profiling is expressly prohibited” on our platform. Unsurprisingly — shocking — these little tweaks did not really have much of an effect in reality. So the news site The Root surveyed people on Twitter in June 2019 about their experiences using Nextdoor and found that a number of black users or non-black users who had black family members, reported that police had been called on them. And there was a clear pattern of users using terms like “thug” and “terror gang” when describing young black people walking down the street or up staircases. One particular user described as a quote, “dark, suspicious man” end quote, on Nextdoor, told a story of moderators for Nextdoor using transphobic slurs. The user said that he was banned from Nextdoor after simply defending a transgender person who was being harassed through the platform.

Adam: Yeah. So there’s sort of more extreme versions of this. There was — and it’s still around — there was, in 2016, there was an app launched called Vigilante.

Nima: It’s a little on the nose there.

Adam: You can sort of see where this is going. It renamed itself Citizen after a backlash for—it’s obviously—they were basically going to, if someone reported a crime happening, what would happen is that they would ping the app and then the app would basically put out an alert to people nearby to go, like, see if they’re okay. Which, if you know anything about the way crime works or the ways in which human psychology works, is a really bad idea.

Nima: Terrible, terrible idea. Grab your bats and chains, maybe guns and go to this thing that one person said is happening.

Adam: So, basically, you have an app that effectively conditions every white guy with a chip on his shoulder to become George Zimmerman. So, not a good idea. There was an ad that Vigilante ran in 2016. “This is a real app and these are real users.”

[Begin Clip]

Woman #1: 911. What is your emergency?

Woman #2: There’s this man following me. He’s wearing a hoodie. He’s really scary.

Adam: So here we have a woman who’s a nurse walking down-

Nima: To her car.

Adam: A dark parking garage. It’s a white guy in a hoodie, because you’ve got to do the Death Wish thing and make sure they’re white.

Nima: And now the cops, who are three miles away.

Adam: She’s called the cops. Vigilante is hip to what the cops are doing. They’re sending an alert. There is now a green arrow going to all these phones-

Nima: Bing bing bing bing bing!

Adam: Where they are told that there’s a, there’s a suspicious man following a woman at Park Avenue and Cumberland.

Nima: So now the bodega owner knows about it. Some families know about it.

Adam: They’re basically saying, ‘Go there and record,’ and so they, they’re showing a, a bunch of different people rushing to the scene. They’re running, they’re on their bike. It’s horrible.

Nima: Now she’s being chased.

Adam: Now they are taking videotapes of it. And now the cops are 1.3 miles away. She’s now to her car, the man attempts to what appears to rob her. He drops her on the floor. Now the guys have shown up to take on Ashton Kutcher who’s trying to kill her. It’s not clear.

Nima: And the, and the text on the screen says, “Can injustice survive transparency?”

Adam: “Now operating in New York City.”

[End Clip]

Adam: So obviously they sort of invert the racial dynamic because it makes them feel like they’re not obviously just being racist.

Nima: Right. So since you all were just hearing it and us describing it, just to make it perfectly clear, the neighbors that were then alerted by the Vigilante app were all people of color who raced to the aid of this nurse, also a person of color. Turns out the guy in the hoodie harassing her: white guy. But remember the cops were also being called, which means when the cops show up—I know this is fantasy and this is an ad—but the reality of it is when the cops would show up, there’d be a woman in distress who had reported just that there was a man, no other description, following her. And then the cops would show up to all these, you know, black and brown men surrounding this woman and the cop would have no idea what was actually going on, obviously, which could really be a very dangerous scenario for the apparently do-gooder-y neighbors who rushed to this woman’s aid.

Adam: And of course the premise of it is bullshit, which is that it’s not going to be people calling the police about sketchy-looking white guys in hoodies. Like, I mean maybe every now and then, but mostly, let’s be honest, it’s going to be white people calling about black people, which is how this goes now. And this whole thing is based on the premise of this guy is wearing a hoodie, right? Which given that this is three years after Trayvon Martin, it seems a little bit charged. Now “hoodie” sort of becomes the, I guess, the official bad guy.

Nima: Yeah, exactly. That’s now the “thug” garb. Even though literally what the Trayvon Martin case should tell everyone is that it should not be that.

Adam: Yeah. It’s also just cold outside or it’s raining.

Nima: So shortly after the launch of the Vigilante app, the NYPD actually disavowed it because of a lot of these problems. And uh, the cop said this, quote, “Crimes in progress should be handled by the NYPD and not a vigilante with a cellphone.” End quote. Soon thereafter, Apple itself removed the Vigilante app from the Apple Store, making it much more difficult obviously for iPhone users to use it.

Adam: But Vigilante of course rebranded and relaunched in 2017 as Citizen, emphasizing the crime reporting function and deemphasizing vigilantism, though multiple reports indicate that the only real change was aesthetic. True to form, the relationship between the app and the police turned out to be not so adversarial. So according to The New York Times in March of 2017, a former NYPD spokesman who initially criticized the app started working as a spokesman for Citizen. So the police like these apps. What they don’t like is when they create a public relations problem by taking the mask off and going straight into Death Wish mode, right? Where you’re sort of promoting people going in and taking out the scum. Now there’s another element to this, which is surveillance that we are putting in people’s houses.

Nima: Like, literal cameras, not just self-reporting apps.

Adam: Right. And this is the Amazon Ring doorbell system fueled by something we’ll talk about a bit later, which is the great evergreen “porch pirate” story. So we want to start off by reading Caroline Haskins at VICE, who has done great reporting on this of late. I think she also works for or works at BuzzFeed, but she’s done a lot of the original reporting on this, so definitely check out her work at VICE and BuzzFeed. She wrote in an article detailing Amazon’s relationship with the police, quote: “Here’s what you’ll find at a Ring party.” These are parties that Ring throws for police all throughout the world, not just the United States. Quote:

Open bar. Free food. Live music. A “special recognition ceremony.” Free Ring doorbells. A live viewing of Shark Tank, the show that launched what would become Ring and to which company founder Jamie Siminoff eventually returned as a shark. And, most importantly, an appearance from retired basketball player Shaquille O’Neal. You could find all this at a private party that Ring hosted for police at the 2018 International Association of Chiefs of Police conference in Orlando. Ring threw a similar party on IACP weekend this year, this time in Chicago, including appearances by both Shaq and Siminoff, according to an event invitation obtained by Motherboard using a Freedom of Information request. The invitation notes that firearms are strictly prohibited. Ring — a company that has hosted at least one company party where employees wore ‘FUCK CRIME’ shirts and racist costumes of Native and indigenous Americans, according to new images reviewed by Motherboard — wants to brand itself a friend of police, the antidote to fear of crime, and a proponent of law and order.

Nima: So Ring, if y’all don’t know, is a WiFi-powered digital home security system. It was launched as DoorBot in 2013 but then relaunched as Ring, as it’s now known, in 2014 and then subsequently acquired by none other than Amazon. So in addition to surveillance cameras, which focus on your porch or out your front door, in addition to surveillance cameras, Ring actually sells doorbells with surveillance cameras built in and alarms and other kinds of smart home security devices. And it’s not really hyperbolic to say that Ring plays an increasingly instrumental — not to mention very lucrative — role in upholding the capitalist police state. Investigative reporting at The Intercept, as Adam just said, VICE’s Motherboard tech vertical and elsewhere has shown that Ring is extremely closely aligned and allied with police departments around the country.

Adam: So the way it generally works—Ring has, both before and after its acquisition by Amazon, Ring has always worked to pursue police departments as partners. So what they do is they, just to be clear how this works, Amazon gives Ring to police departments for a highly subsidized price or even free, and then they use police to give it away to people. So police will actually go door to door and say, would you like a Ring?

Nima: Yeah, they’re like door-to-door salesmen for Amazon products.

Adam: And obviously people are maybe somewhat dubious about putting a camera in their house because when a police officer is over, they say, ‘Oh, it’s not controlled by us. It’s controlled by Amazon.’ Of course, the police have real-time access to Amazon’s tech-

Nima: As if that’s also somehow fine.

Adam: Well, right. Well, people trust corporations more, right?

Nima: Exactly.

Adam: If the U.S. State Department was, like, ‘Here’s the social media network, would you like to join up?’ People would say, ‘Oh no, it’s the government.’ But if it’s, if it’s first funder Peter Thiel, who just co-founded a company with the CIA, Palantir, shows up in 2004 and it’s, like, ‘Oh well here’s the scrappy guys from Harvard and here’s this movie talking about how scrappy they are. Oh, dude, you want to sign for this social network that’s based in Silicon Valley and that works hand in glove with the national security state?’ ‘Oh yeah, that sounds good. Just some scrappy Harvard guys. I saw that movie with Jesse Eisenberg.’ Anyway, Amazon has a partnership with the police in over 700 jurisdictions now and there’s actually a map, after enough public pressure, they released a map that tells you specifically who these police departments are. And one of the things that fuels this, I would say the primary thing that’s fueled this, because of the sort of value add, as it were, for the consumer, the “homeowner,” the home renter whoever, has been the idea that this can help you combat “porch pirates.” Now, from Amazon’s perspective, there’s obviously a dual function here. It builds up their surveillance portfolio, which is a huge part of their growth model, but it also helps reduce shrinkage. Having a camera on porches and having the police operate as, effectively, private security helps reduce theft, which of course is central to Amazon’s growth from getting packages from their store to your home. Now, one of the ways they’ve done this is by fueling a “porch pirates” panic, which is increasingly out of control. So I’m sure you’ve seen these stories about “porch pirates” and police clamping down on “porch pirates.” What they don’t tell you is that many of the police that are feeding these stories to local media that uncritically report them, have relationships with Amazon Ring. In fact, I think at this point, most do. 
So there’s Kansas City PD—this is just from the last couple-of-week period leading up to Christmas 2019: “As porch pirates evolve, police offer tips to protect your packages.” That was a story fed by Kansas City Police Department that has a relationship with Ring. This is from the San Francisco local CBS: “Porch Pirates Target Milpitas Neighborhood.” That was the primary driver of that story based on quotes is the Milpitas Police Department. Oklahoma City Police Department, this is KOCO, “Porch pirates getting more aggressive,” says KOCO Oklahoma. The primary source for that of course is the Oklahoma Police Department. Prince George County Police Department is the primary driver of a local WJLA story which is entitled “Porch pirates? Police seek help finding 3 suspects.” So these police departments have arrangements with Amazon. They then go to local media and say, ‘Guys, we have this really important police porch pirate story.’ So this year they had to sort of raise the stakes because you can’t just keep doing the same “porch pirate” story. Everyone sort of knows it now. And so like Jumanji 2 they have to kind of mix it up for the holiday season. They’ve noted how they’ve quote, how porch pirates have quote, “gotten more aggressive.” They quote, “they’re getting more creative,” unquote, and are quote, “starting early this year.” So you sort of, to increase the panic in people’s souls, you have to say they’re getting more aggressive.

Nima: Anytime you order anything. I mean, something amazing about this is of course how nakedly feedback-loopy it winds up being. Right? So with online sales increasing that there’s—fewer and fewer people are going to actual like brick and mortar stores, you just order everything on Amazon—so Amazon, you know, is this mega mega company. So then when you have an increase of deliveries to back doors, front doors, porches, you then get to spin that as welcoming even more aggressive crime in terms of “porch pirate” theft of your packages. And so how can you better protect your own deliveries, which you’ve purchased through Amazon, because almost all of these articles then show Amazon packages on front steps and porches. How do you protect that? Not only the cops, but how do the cops know what’s going on? With an Amazon product called Ring.

Adam: Right. It’s a feedback loop.

Nima: And so you just need to buy more Amazon to surveil your Amazon packages being delivered.

Adam: Yeah, I heard you like some Amazon, so I put some Amazon so you can Amazon when you Amazon. Then of course the whole source about “porch pirates” quote, “getting more aggressive” and quote, “getting more creative” and quote, “starting earlier” unquote, all those sources, if you read these articles, are the police. The police are just saying it. There’s no, like, the reporter isn’t sussing through data and like not running the numbers and saying, ‘Oh wow, porch pirate incidences are up this year.’ They’re not doing studies with the local state university to find out if they are quote, “starting earlier this year.” This is all just bullshit marketing from the police because the police want incentive to scare people into getting these Ring surveillance systems because, you know, the sort of benign reading is that it makes their job easier. It makes their ability to catch “bad guys” or whatever easier. The more sinister reading is that police in general like surveillance networks, they want these camera systems because the police by default want more sophisticated surveillance systems because that’s what police want. Now we hear a lot about the rise of surveillance in China and the use of CCTV cameras in China. And that of course is a big problem there. But a recent study from early December of 2019, I’m reading a headline from a magazine called Inverse, it says quote, “The U.S. has more surveillance cameras per person than China, new study shows.” “The United States has 15.28 CCTV cameras for every 100 individuals, followed by China with 14.36.” So the U.S. is a very surveilled place. We are more surveilled than China, despite all the sort of scary headlines about Chinese surveillance. And one of the reasons is because of the ubiquity. The privatization is a way to sort of fuel it and make it more ubiquitous because people feel like it’s a choice they’ve had.

Nima: Yeah, no, exactly. That, like, you are choosing to protect your own property, your own neighborhood, your own family, and that the way that your choice manifests is not only by buying more products, but then being a partner with police to further that protection. So because it seems self-driven, it doesn’t seem as Big Brother-y even though the effect is identical. So in July of 2019, Motherboard, as we mentioned earlier, Caroline Haskins, reported that Amazon was also enlisting police departments themselves to advertise Ring products in exchange for more free products for residents and that they would get access to the “portal,” which keeps everyone connected. So from the article, there’s this, quote:

In order to partner with Ring, police departments must also assign officers to Ring-specific roles that include a press coordinator, a social media manager, and a community relations coordinator.

Nima: End quote. On top of this, police departments get credits toward free Ring products for residents by directly promoting the Neighbors app. So the Neighbors app is like the app partner to the Ring surveillance cameras. So Amazon actually purchased Neighbors to the tune of $1 billion. So now Amazon owns Neighbors, which is the app and Ring of course, which is the surveillance system, and they work together. The Ring surveillance system then reports things through the Neighbors app. So this is really the foundation of a mutually beneficial and, quite frankly, poisonous relationship between a private company and law enforcement. One more quote from the article is this, quote:

The result of Ring-police partnerships is a self-perpetuating surveillance network: More people download Neighbors, more people get Ring, surveillance footage proliferates, and police can request whatever they want.

Adam: Yeah. And so here’s the kicker card that should make this all fine, which is that in April of 2019, Harvard’s journalism news site, Nieman Lab, reported that Amazon Ring had plans to produce quote-unquote “crime news.” The article, uh, noted that Amazon was hiring a managing editor to work for Ring on a quote “breaking crime news show.” The job listing has since been deleted, presumably because Amazon got wind of the story and it went viral on Twitter. From the article, quote:

The job requires at least five years’ experience “in breaking news, crime reporting, and/or editorial operations” and three years in management. Preferred traits include “deep and nuanced knowledge of American crime trends,” “strong news judgment that allows for quick decisions in a breaking news environment.”

Adam: So it appears that at the very least there was early discussion, there was some kind of discussion about turning the Amazon Ring cameras’ network into a television show, a crime prevention television show. We’ll see if that materializes over the coming months and years, but it does show the synergistic relationship between surveillance and the promotion of crime as a form of drama as a marketing tool to get people to want to participate in their own surveillance or in the surveillance of people poorer and of darker skin than they are. On its website, Ring has a section called Ring TV, which displays a whole catalog of Ring video camera footage. Topics include Crime Prevention, Caught In the Act, Package Protection, Suspicious Activity, Safety & Monitoring, and other categories include Family & Pet Safety and Animals & Wildlife. The captions of the videos look like they’ve been written by police departments: dimwitted attempts at snark about criminals, cute local news stories. We’ll read you some of the headlines here. Quote, “Lights, Camera, Action: Watch this guy turn away as soon as the floodlights came on.” Quote, “Oh! They Got A Camera: An attempted break-in ended abruptly when a burglar caught sight of Ring.” So they sort of promote the kind of “dumb criminal,” like, ‘We got ‘em!’ Very dehumanizing language. Of course no sort of nuanced meditation on the conditions of the people robbing the porch. You know, these are sort of, these are just the “bad guys,” and no sense of how poverty or rising home prices or, you know, desperation to sort of keep up with the Joneses fuels this kind of stuff.

Nima: Well, of course. And so there’s also, you know, built into these apps and these surveillance systems and networks is the idea that crime always equals petty crime, right? That it’s, you know, theft or it’s suspicious activity, burglary and not that those aren’t real things. Those are real things. But that is deemed, and we’ve talked about this before on the show, especially with local crime quote-unquote “crime reporting,” but that winds up being more often the notion of what is dangerous, what is lurking outside the door, the most immediate, proximate type of crime to individuals and to families and to, of course, homeowners and property, but always misses — deliberately so — systemic issues that are far larger than someone stealing a package off a porch, which are, say, mass incarceration, police harassment and abuse, poverty. All of these things that create the environment where these things can take place, those things are never addressed. Those things are not going to be surveilled. Those things are not going to be dealt with through an app. You’re not going to report on your boss’s wage theft. You’re not going to report on, you know, the Chinese food delivery guy’s lack of a fair wage when they deliver your food. Uh, no, you’re going to worry that that person showing up on their bike might take the package that’s sitting outside your door. And so there’s a total kind of power dynamic at play with these surveillance apps as well.

Adam: Yeah, I mean our whole perception of crime is based on a very right-wing, limited version of crime. It’s sort of we, you know, I feel like we beat this horse to death and I will continue to do it. It’s the same thing with how our notions of corruption are limited and how our notions of democracy are limited. It’s a very sort of finite definition of crime that how it ends up sorting out ends up being the poor and dispossessed and the sort of morally superior homeowner who’s protecting their castle becomes—generational theft is not, the sort of pushing out people who can’t afford homes. Homelessness is not a crime itself. It’s the way you respond to it. So all of our sort of bleeding-heart, bitching and moaning aside, these things are by the very nature, by the very, the way they’re set up are designed to promote the interest of real estate and those who own real estate. The entire way in which the media reports crime as we’ve talked about before, is entirely based on centering homeowners and real-estate interests and white people.

Nima: And you can really see this play out in terms of who often invests in these surveillance systems and these snitch apps. So for instance, one of Nextdoor’s funders is Rich Barton, co-founder of Zillow, the major multibillion-dollar online real-estate listing company. He invested early in the Nextdoor app. Another Nextdoor investor, Benchmark Capital, also has substantial investments in Zillow itself. And so in 2017 Nextdoor introduced a new app feature that allowed real estate agents to advertise on their app. So real-estate companies and agents can also purchase their own branded listings to advertise based on a Nextdoor user’s location. So it is combining the neighborhood watch and the snitch surveillance platforms with more real-estate sales and real-estate interests.

Adam: Yeah. I mean Citizen itself, its primary early investor was Peter Thiel, who invested in, of course, he was the first investor of Facebook, another popular surveillance app you may have heard of. He also was a founder of Palantir, a CIA-backed big-data surveillance company that has contracts with the CIA, FBI, NSA, ICE, the Pentagon, law enforcement agencies including Chicago PD, LAPD. Slow Ventures was also an early investor in Citizen. They also invested in Cadre, which is a real-estate investment company. RRE Ventures has invested in HireHaven, which maintained a contractor database for construction. In a 2019 op-ed for the San Diego Tribune, Mark Powell, a member of the board of directors of the San Diego Association of Realtors, which is of course a front for the real-estate development industry, wrote an op-ed that was entitled quote, “Why it’s time for California to address porch pirates,” where they pushed for a law to increase the punishment for people who steal from porches. You see this symbiotic relationship between real-estate interests, hyper-criminalization of quote-unquote “porch piracy” and the snitch apps, which create this constant sort of feedback loop of panic and fear that is completely divorced from the objective reality of crime, which is that crime has gone down, including property crime.

Nima: And so this has everything to do with gentrification. It has everything to do with a new digital gated community, everything to do with the new type of redlining segregation that now the kind of inverse of white flight, either low-income or communities of color are now seeing increased levels of these so-called “quality of life” complaints to the cops. For example, in a 2019 report entitled “New Neighbors and the Over Policing of Communities of Color” produced by Community Service Society in New York, CSSNY, examined Manhattan and Brooklyn gentrifiers’ “quality of life” calls to the police by studying the data gleaned from the 311, it’s like the, you know, local, you can call the city to make complaints using 311. The calls consisted mostly of complaints about noise and the use of public space. The effect of these calls, of course, is the increased harassment of existing residents in these low-income neighborhoods. From their report there’s this quote:

Citywide, all quality-of-life complaints referred to the NYPD increased by more than 166 percent from 2011 to 2016. But the increase was significantly higher in those lower-income, majority person-of-color tracts with large influxes of white residents than those without large influxes of white residents.

Nima: Even, you know, when you think about Eric Garner being killed by the NYPD, that occurred in a neighborhood on Staten Island near a new economic development with increasing complaints for low-level offenses. So you can see the real dangerous, sometimes lethal consequences of merely increasing “quality of life” calls to the cops.

Adam: So then the question becomes, does Ring actually work? And the evidence says it doesn’t really. When Amazon announced that it was acquiring Ring, the company claimed that a pilot program between Ring and the LAPD had quote, “reduced crime by as much as 55%.” But this statistic was based solely on data gathered by Ring itself. There was an MIT Technology Review which inquired about the methodology and neither Ring nor the LAPD would answer their questions. This review went on to compare publicly available L.A. crime figures and found that the places where Ring products were used actually saw an increase in burglaries during the course of the pilot program. This does not necessarily of course mean that Ring causes burglaries and other property crimes, but it shows that it certainly didn’t reduce them. And MIT Technology Review also discussed a study carried out independently of Ring that found the neighborhoods without Ring doorbells were less likely to have break-ins than those with them. The city of West Valley City, Utah tested two neighborhoods with comparable crime rates. One was given Ring products, one wasn’t. The neighborhood without Ring products saw a 50 percent drop in burglaries while the one with Ring products saw a 41 percent drop in burglaries. Don Chon, an associate professor at Auburn University in Montgomery, Alabama told the New York Times that, quote, “he has not found any evidence in his research that security measures like alarms, special locks, high fences or watch dogs reduce the burglary risk.” So there’s this other part of, like, they don’t even really work.

Nima: The apps claim that they work, but there’s no evidence to say that they work.

Adam: But even if they did, right? ‘Cause I think that’s a little bit separate. But I think what’s more interesting to me is not, do they not work, although I think the evidence shows they don’t really, it just speaks to the psychological nature of this whole thing. They’ll tell you this, and it’s like in all their internal marketing materials for Ring and ADT, that they sell peace of mind. They make you feel good.

Nima: Right. Make you feel safe. But the way they make you feel safe is with their products, which means without their products, more fear, more peer pressure to have more security. And the way that you do that is by buying more products sold by Amazon.

Adam: But the problem is, is that to sell that peace of mind, they have to also sell fear. They’re not just selling, they’re not responding to a necessarily organic thing in the market. Now, maybe in the ‘60s and ‘70s when the murder rates were high, you can argue, ‘Okay, maybe they emerged from some kind of organic crime wave,’ but without really the crime wave there, they’re using the “porch pirate” narrative and other narratives to sort of create a panic and to create a fear that maybe otherwise wasn’t necessarily there. And that’s where you have your problem.

Nima: Right. You just inject the virus and then say, ‘Look, I have the antidote and it’ll cost this much money.’

Adam: And that’s where local media comes in. Local media comes in because local media runs the same goddamn “porch pirate” story every five seconds, and your sort of paranoid person watching this thinks that there’s all of these hooded black men outside their window lurking to grab the beard trimmer they bought their nephew for Christmas, and it’s not good.

Nima: Well and there’s the added element of, it just ensures more racial profiling because it’s not merely about protecting product, it’s also about the fear of belonging and appearance in a neighborhood and you can see this manifest in, like, delivery men for UPS or for FedEx are having the cops called on them, are being surveilled themselves, as not being trusted. So even the people whose job it is to bring you your Amazon products to your door are now seen as being potential committers of crime. Right? That they are the villains as opposed to part of the network which is going to bring you more surveillance.

Adam: Right.

Nima: To talk more about this, we’re going to be joined by Sarah Lustbader, senior legal counsel at The Justice Collaborative and contributor to The Appeal magazine. She was previously Senior Program Associate at the Vera Institute of Justice and a criminal defense attorney at The Bronx Defenders. Sarah will join us in just a moment. Stay with us.

[Music]

Nima: We are joined now by Sarah Lustbader. Sarah, thank you so much for joining us today on Citations Needed.

Sarah Lustbader: Happy to be here.

Adam: So, we’ve been talking a while about the optic and media implications of apps like Citizen, Nextdoor and now Neighbors, the sort of Nextdoor spinoff. These kinds of surveillance systems, many of which involve relationships with the police and monitoring surveillance equipment, obviously cameras, microphones, etcetera, there’s this kind of lofty vision about what these crime-prevention geo-local apps can do, but mainly because they’re self-reporting and police reporting, they rely on a kind of ad hoc, racist, and unscientific system. What are these systems sort of alleged to do on paper in some meeting in Silicon Valley versus how have they actually played out, and what is the reality of their manifest effect?

Sarah Lustbader: Yeah, I mean, I kind of think about it as a sort of outgrowth of the idea of crowdsourcing. And so you think, like, when you’re choosing what restaurant to go to or what sight to see in a new city, you think, ‘Oh, I’ll just trust the wisdom of crowds, surely they know best.’ And you know the worst that happens is like your pasta is not that good or whatever. But, best-case scenario, you find the best thing or the best product or something because the crowd sort of agrees with you. But it sort of brings that mentality to crime fighting, which is a terrible idea as far as I’m concerned when you think about the history of vigilante justice and lynch mobs going with the crowd, not the heroic Twelve Angry Men sort of justice that we have.

Adam: The original failure of crowdsource app-ing.

Sarah Lustbader: Exactly right. Like, you don’t really want to be with that crowd. In fact, you might not want to. And so I think that’s the promise. Like, if you go to their websites, it’s this optimistic community-based tone with this undercurrent or kind of over-current of fear. So, you know, if you look at Citizen, I think the website says, “Our mission is to keep people safe and informed. We believe everyone has the right to know what’s happening inside their communities in real time.” So they give you these real-time 911 updates, but they also update you with things that your neighbors are saying. So it’s not just 911 or even police blotter stuff, which, like, I don’t know why you would want to know that stuff in real time in the first place. It seems stressful. But it’s also stuff that your neighbors think is suspicious, and so it actually ends up magnifying and perpetuating all sorts of discriminatory and ugly predilections of people when it comes to guarding their own homes or, or protecting their children. Really sort of, like, ‘We’re helping each other. We’re a community.’

Adam: Yeah. There was—because in the original advertisement for the Citizen, which we listened to earlier, there’s a woman who’s underneath the passageway. And they tried to make everything race neutral. And she’s like, ‘There’s a man in a hoodie following me.’ Which is interesting because this ad was aired about three years after George Zimmerman, and you would think that maybe “guy in hoodie” is not really the best criteria for sounding a multi-faceted alarm system. So like the inputs are just, like, ‘This person looks weird’ or, like ‘This person scares me,’ which obviously is a solipsistic criteria that has with it all kinds of baggage. Mostly racist baggage.

Sarah Lustbader: Yeah, and if you look it up, I encourage everyone to go look this up. It’s a pretty unbelievable couple of minutes on YouTube, but the guy who is suspected merely because he is in a hoodie, then of course turns out to be exactly the guy lurking under the bridge that, that every, you know, damsel in distress is-

Adam: It’s Stranger Danger, yeah.

Sarah Lustbader: Exactly. So it just really hammers home all of these things that we’ve been taught to fear mostly wrongly. And actually crime is falling in the U.S. If you look up statistics, you will see that most places are safer and safer and in fact there are historic lows of crime, especially violent crime. And one of the effects of these apps is to put suspicion and fear at the forefront of people’s minds and allow them to give you these alerts on your phone so that you have this wrong perception that ‘Crime is everywhere, and in fact it’s on the rise.’ And that’s a problem for a lot of reasons other than just, like, people being scared. It’s a problem because it means people are more likely to elect tough “law-and-order” candidates when it comes to DA or you know, mayor or sheriff and it can actually impede reforms. And it can also just, you know, like, stir up racism.

Adam: Yeah, I mean it’s a conditioning mechanism as well. And that may be one of the political, it’s sort of just after 9/11 the kind of constant terror alerts and color alerts and the duct tape gets-

Nima: And budgets rise and there’s outcry.

Adam: Yeah. It’s just, like, there’s this, this constant anxiety. It’s sort of like the, it’s like watching 24, you’re just constantly, there’s a ticking time bomb at all times.

Sarah Lustbader: Right. And so when something’s on a ballot, a referendum for legalizing marijuana or giving the vote to people who are on parole or probation, which New Jersey just did, what you’ll probably find, I assume, if people are sort of, as you say, conditioned to be so fearful, to see people in hoodies as The Other, they’re going to vote against that sort of thing, are going to vote for candidates who are going to oppose those things. And so you’ll see a lot more sort of stinginess, you know, when it comes to like education and prison programs, or things that are just, like, objectively good will be a harder sell.

Nima: A lot of these systems, whether it’s Citizen or Ring, a lot of them have really dubious histories of venture capital, or being on Shark Tank and then getting rejected and then having a new life in another way. But there’s another one, Sarah, that you’ve written about called SketchFactor, that takes this approach maybe to its most logical ends. Can you tell us about the journey of SketchFactor and how it may be different or maybe not so different from the current crop of crowdsourced snitching surveillance apps?

Sarah Lustbader: I have to admit that I learned about SketchFactor in 2015 not because I downloaded it, but because-

Nima: Full disclosure.

Sarah Lustbader: Yeah, (laughs). Full disclosure, I did not download it. No, my, my husband’s a writer and he wrote about SketchFactor for the New Yorker and I think the article was called something like, “When an App Is Called Racist” and it’s about this, it’s not exactly the, like, tell us your, I don’t know, like, the sketchy things that happened in front of your door. What it was is in some ways worse, is basically founded by, like, two white people in their twenties who like moved to New York from elsewhere I think. And, I don’t know, maybe they felt scared walking around. I think that one of them lived in the West Village so I’m not really sure why she felt scared but, crowdsourced sketchiness. And so what it was was, like, any city you live in, there’ll be a map and overlaid on that map you could put in a tag, like a geo-located tag for, ‘Okay, on the corner of Fancy Street and Super Wealthy Way in the West Village, something sketchy happened.’ And then somehow that would, like, contribute to the public’s understanding of how sketchy a neighborhood was. I mean, the whole idea was ridiculous, and I think that the news coverage was pretty quick to say, ‘Oh, this is very racist. This is, like, a real problem.’

Adam: Well, yeah, ‘cause their sin was to sort of be overt about it. And I think that there’s this weird interplay you see with the other apps where the ad for Vigilante, which later became known as Citizen, was really just sort of cartoonishly fascist. And I think some of the ones like Nextdoor and Amazon Ring, they’ve, like you said, they’ve tried to, they’ve realized the public relations liability and so they’ve taken this very lofty tone. But then there’s this very kind of wink and a nod to, ‘Yeah, you’re still going to use this to monitor your neighborhood.’

Nima: Well. Right. And to, like, create basically, like, a maze of being able to walk around so that you avoid black neighborhoods or streets that are deemed sketchier than, you know, the most wealthy place. So, like, yeah, I mean the West Village example I think is amazing, Sarah. Not that everyone who listens to the show knows about Manhattan, but the West Village is definitely a wealthy neighborhood. And so the fact that people living there or moving there with enough money to be able to move to that neighborhood would still design a service that allows you to still be the most segregated version of yourself is in itself pretty remarkable.

Sarah Lustbader: Yeah. They weren’t, like, that subtle about it. I think that the app’s icon was a black bubble with googly eyes apparently. And so like Gawker, their headline was “Smiling Young White People Make App for Avoiding Black Neighborhoods.” You know her quote in the, in the New Yorker piece, one of the co-founders, she basically said, ‘Well, you know, we didn’t know, we didn’t think that we’d be called racist. We don’t think we’re racist. And we basically thought that this app would solve its own problems.’ Like, people would just sort of downvote problematic posts. I just don’t know if she’d ever seen the Internet before, because the idea that users would just solve racism if given enough opportunity to communicate.

Adam: Yeah. It’s weird, but my new pitchforks and flames app didn’t have the nuance I thought it would.

Nima: Everything is going to get better when people freak out together.

Adam: One of the elements I’m super fascinated with is the concept of centering people’s subjective perceptions as the main unit of information. So there was an article in the Chicago Sun-Times last May talking about “flooding the zone,” which is sort of basically code for cops going downtown and making sure that not too many black people are going to Magnificent Mile where tourists and rich white people are, and I’m going to read a section of that. They said, quote:

Chicago police flooded downtown during summer months, paying particular attention to CTA stations where large groups of young people arrived to congregate in the Michigan Avenue and State Street shopping districts.

If police saw large groups of young people intimidating shoppers or otherwise causing trouble, they would surround those groups, follow them and sometimes steer them back to CTA stations.

Adam: This idea of intimidating shoppers is fascinating to me because they’ve committed no crime, and the extent to which people are intimidated it’s purely-

Nima: It’s a crime against consumerism, Adam.

Adam: Well, also, I mean, even setting aside the centering of shoppers as sort of the moral unit we should be concerned with, the idea that, like, intimidating someone is sort of itself a crime is so fascinating to me because it really does get to the core of what really police do. This says the quiet part out loud, which is ameliorating or making the wealthy or the largely white population sort of feel good about themselves. And so much of this, the psychology behind a lot of these apps seems to be based on this idea that, like, it’s not even about any objective notion of crime. It’s about perceptions of crime and managing those perceptions. And of course there’s, which we talked about, there’s kind of a feedback loop here. Like you said, one of the stubborn things for criminal reform experts and activists is that crime keeps going down, but perceptions of crime either stay the same or even go up slightly. One of the reasons, I assume, is because of these, of not just of course the torrent of media, but also I think the rise of these snitch apps as well.

Sarah Lustbader: Well, and I also think that you raised a good point, which is that it’s not just that it’s not a crime, it’s that people now feel like their worst impulses are being not only validated but encouraged. So, if you are afraid by someone merely existing who has certain attributes that you have been taught to fear, maybe there’s a part of you that says, ‘You know, I really shouldn’t indulge this part of me.’ And then you have these apps that are sort of drawing it out and saying ‘No, no, no, you should.’ And so one of the, when I was researching this, one of the more disturbing posts that I saw, I remember it was pretty simple. It was just, like, ‘There’s a woman with a shopping cart in my neighborhood.’ The implication being, ‘The only people that would have a shopping cart are people who don’t belong here and their existence is somehow threatening to me, and now I feel like I can say something about that. We ought to do something, you know, about this.’

Nima: Well, right. And I think you, you know, see this playing out with all of these videos posted recently about even, you know, guys working for UPS who are black and now people, like, coming out of their homes and saying, ‘What are you doing here? We’ve heard there are people lurking around the neighborhood wearing UPS uniforms,’ you know, and, like, (laughs) yeah. And so just again, the uh, perception of fear that then doubles back on itself and actually creates, uh, circumstances in which real harm can be done.

Sarah Lustbader: And especially when you talk about doubling back down, it’s not only the, the internal sort of validation of the worst impulses, the worst, most discriminatory, prejudicial impulses. But I think you also have a feedback loop when it comes to the police because police have long been shifting toward, you know, what they call data-driven policing, which sounds very technical and accurate, but is only as good as the data itself. And so when the data that’s sort of generated from these apps and these perceptions becomes part of the crime data that they’re using for predictive policing algorithms, then all you’re doing is ensuring that there’s nothing scientific about predictive policing or policing in general.

Adam: Let’s talk about the perverse incentives at the heart of this, which is that, as many authors, including yourself and writers have noted, is that Amazon has a partnership with the police in over 700 jurisdictions now where the police sort of double as pitchmen for the service, and there’s a mutually beneficial relationship. The police get a monitoring system, police in general throughout history, no matter the country, like to monitor more people when given the option, especially when it’s corporate subsidized. But then there’s the other side of the equation, which is Amazon’s entire business model is predicated on reducing shrinkage of packages, and the police are basically a state-subsidized security system for them. Can we talk about the ways in which fear is sort of built into this perverse business relationship between police and the corporate America and how that fear almost becomes the primary animating growth factor here?

Nima: “Beware porch pirates!”

Adam: Oh, yeah. Well we have a torrent of “porch pirate” stories, which we won’t bore you with, but it’s the story that they write about 80,000 times a year.

Sarah Lustbader: Yeah, I mean the idea that the incentives are perverse sort of assumes that you care about mass incarceration and discrimination. I think that the incentives are not at all perverse for the two actors that you’re talking about.

Adam: Well, right, yeah. I meant for moral agents, I should say.

Sarah Lustbader: For people who care about good things.

Nima: For the Citations Needed main audience. (Laughs.)

Adam: For people with souls.

Sarah Lustbader: For you guys out there, of course it’s perverse, but I do think that it’s important to see how much of a win-win this is for Amazon and for all the companies that are making and assisting the police and for the police themselves. I mean this is, like, what the kids would call, like, a self-own, I think, where you’re just getting people to just rat on themselves. And yes, Amazon is interested, of course, in shrinking the porch pirates, but I think they’re more interested in becoming an essential part of everybody’s home. So I think, you know, I saw a parallel between this and when Axon taser began supplying various police departments with body cameras for free. You know, it wasn’t because they’re benevolent. The cameras were, you know, I guess they took a hit there, but what they really wanted was the data storage. That was where the money was.

Adam: They also knew the future of policing was to use these body cameras as real-time monitoring systems, which is of course what ended up happening.

Sarah Lustbader: Yeah. And so they become essential. Right? And so I think that that’s what we all should be very wary of, which is that these private corporations are really making out well, I think. They’re becoming essential both to consumers but also to these police departments. And I think one of the articles that you were referencing was saying that Amazon had, I guess admitted that the police who download videos that are captured by the Ring doorbell cameras, these police can keep them forever and share them with anyone they want without providing evidence of a crime.

Adam: Yeah, it does not require a warrant. That’s important to note that the Ring systems that the police hook up with, yeah, they don’t require a warrant.

Sarah Lustbader: They don’t, as far as I know, let the police just access the videos willy nilly. I think they do have to ask people for permission.

Adam: Yeah, you ask them ahead of time and then I’m pretty sure that most people are sort of, yeah, whatever. And then later on they realize that.

Nima: Well, right, because it gets back to this idea of the self-own. I mean, Sarah, as you were saying, like, obviously if the police came to your front door and were, like, ‘We want to install a camera in your house, we want one also on your front porch and your back deck.’ Most people would be, like, I don’t know, maybe skeptical about that. But this is all laundered, garnering, like, the same exact result, just through private companies, and one that you have now chosen yourself as a consumer as a way to protect your family and your property and your possessions, and somehow because you do it yourself and then grant license to police to view it and use it, somehow that makes it okay, or that makes it less super surreal when you’re just a part of creating the panopticon as opposed to just being the one surveilled only. Right?

Sarah Lustbader: I think that the individual versus the collective is an interesting point too, because most of these cameras, although I know not all of them, are actually pointing outward, and I think people are probably considering, ‘Okay, well it’s not really capturing me,’ right? ‘It’s capturing everyone else ’cause I’ll be looking outward and so I’ll give permission’ or something because of that. But I think what’s less understood is that there’s a collective outcome that’s happening, which is it’s creating a panopticon so then you become part of it. I mean, not to mention the fact that whatever happens on your porch could be completely private and something you wouldn’t want police or anyone else to know about. Certainly not to, like, upload onto a site that all your neighbors see.

Nima: Yeah, forever.

Sarah Lustbader: Yeah. Forever. But even still, I think it does get to a particular individual-versus-collective strategic failure when it comes to interactions with law enforcement, people don’t really see the bigger implications of their particular actions.

Adam: Well, right. Because they’re the sort of central hero in their story, which is to protect their family because they’ve, you know, they’ve seen Taken too many times.

Sarah Lustbader: How many times can you see Taken?

Adam: You can never see Taken too many times.

Nima: (Laughs.)

Sarah Lustbader: (Laughs.)

Adam: The premise of every third movie. Right? The sort of the home invader, even though it happens once every million years. Stranger Danger in general fuels a lot of this stuff.

Sarah Lustbader: Yes.

Nima: Because I think, you know, something that is often missed in this individual-versus-collective is that when your camera is facing out, you are not on it, but when your neighbor’s camera is facing out, you are on it. So it just creates like a, you know, constant kind of Rear Window-ism that the cops then just have access to and that these huge corporations just keep making money on.

Sarah Lustbader: Yeah.

Nima: Can you tell us about maybe some of the movements that are working toward fighting some of this, or ways that people can be sort of aware of what’s going on and maybe not feed into this themselves?

Sarah Lustbader: I think that a general skepticism of police and also these public-private partnerships is really important. When you have a company that you might have a nice positive association with, like, I don’t know, probably your listeners don’t have a positive association with Amazon, but plenty of people do. And you think, ‘Okay, well I trust them’ in the way that you were saying before and ‘I feel dubious of the police maybe, but Amazon, you know, like, they get me my kid’s diapers on time.’ It’s always suspect when anyone’s giving you anything for free.

Adam: Well, yeah, that should be your first hint.

Sarah Lustbader: There’s a Stranger Danger that’s real.

Nima: We will leave it there. Sarah Lustbader, senior legal counsel at The Justice Collaborative, previously senior program associate at the Vera Institute of Justice and a criminal defense attorney at the Bronx Defenders. Sarah, thank you so much for joining us today on Citations Needed.

Sarah Lustbader: It was great. Thank you.

[Music]

Adam: I’m glad she touched on the SketchFactor part. It was a short-lived app idea, but the interviews with the creators were—if you ever get a chance to read them—they’re just completely, they’re a love letter to like unchecked privilege.

Nima: They’re so tone deaf.

Adam: Yeah, they’re like, ‘I don’t get the problem. Like, it’s not going to be racist ’cause people are not going to be racist.’ And I’m like, okay, well, it’s called Sketch and it’s about people’s perceptions of crime, which are notoriously, believe it or not, not based on empirical data that they gather.

Nima: That they’ve been studying, gathering for just this purpose.

Adam: Yeah, the spectacle—in a library, like one of those research montages in a John Grisham movie. No, that was not how they arrived at their perceptions of crime, believe it or not, you fucking idiot.

Nima: To further discuss the effect of these apps on many local communities, we’re going to be joined by Steven Renderos, co-director of MediaJustice, a national racial justice hub fighting for racial, economic and gender justice in the digital age. Steven will join us in just a moment. Stay with us.

[Music]

Nima: We are joined now by Steven Renderos. Steven, thank you so much for joining us today on Citations Needed.

Steven Renderos: Thanks so much for having me, Nima.

Adam: Thank you so much for joining us. So when we were researching this, everywhere we looked, you provide a great perspective and we’re happy to have you on to kind of expound on that because I do think these things are very expoundable, if that’s a word. I don’t think it is. So you were asked by Vox’s ReCode about the, sort of, rise of the snitch apps and you said, quote, “These apps are not the definitive guides to crime in a neighborhood — it is merely a reflection of people’s own bias, which criminalizes people of color, the unhoused, and other marginalized communities.” One of the things we’re trying to sort of pin down for this show is what our notion of crime is and that it’s not some sort of objective thing. That it’s actually a social creation for the most part and that social creation is based on biases and assumptions. And you actually touched on the unhoused, which we didn’t actually spend a lot of time going over, so I’m glad you did. And who’s in power and who’s sort of determining the crimes. Can we talk about the disconnect first off between what people perceive as crime and what the sort of objective reality of quote-unquote “crime” is and how racism plays into that?

Steven Renderos: For sure. I mean you can look at, for starters, what crime statistics are telling us and what the story of those crime statistics has been over the past couple decades. Crime trends tend to be going down, every major crime category, be it violent offenses, robberies, drug-related crimes. Most of those things tend to be decreasing over the past couple decades, but yet when you look at studies that have been done of people in their communities, when asked the question, like, ‘Is crime going down or is it going up?’ The perception of crime seems to be consistently that it’s going up and not so much, not in line with what’s actually true, which is that crime is going down. Then on top of that, I think going back to the quote that you mentioned, for me the people’s own personal biases thing is the thing that stands out the most when talking about crime because ultimately what crime is really a reflection of is arrests. It’s the human-based decision to arrest somebody based on some perceived failure of a law. And so when you look at crime statistics, arrest records, like overall, we find that, like, close to five million people get arrested every single year. Of those five million, one in four of those people will get rearrested within that same year. And if you look at the reasons for why people are going in, the vast majority are nonviolent crimes. If you look at news, if you look at the content that we’re being distributed through neighborhood-based apps like Neighbors or Nextdoor, you would think that, like, violent crime is like on the rise and it’s so pervasive, but the reality is it’s just not true. So what’s the purpose behind that? Why is it such a consistent narrative that seems to seep into our minds and that seems to be the popular idea? 
You know, there are a lot of interests behind crime being a thing that is in the public consciousness, whether it’s for police budgets or to help sell the latest police tech or for real-estate agents or for some other reason, like, there are some moneyed interests in all of this that are definitely fueling the perception that crime is a thing.

Nima: Yeah, I mean when we talk about tech and the rise of big tech, but also in terms of AI and algorithms that now give, you know, all this information, collect all this data as well, I think what we see all too often, maybe all the time, ubiquitously, is that we really deal with garbage in, garbage out, right? So that science and tech are actually not devoid of human interest and human bias. And so the inputs into these allegedly crime-fighting apps, the 911 calls, the self-reporting, the police reports, the then media reporting about those reports, about those arrests. We just see this collection of even more racist data, which then becomes the basis of what we are told is just objective data. And yet the focus remains, you know, as we talk about definitions of crime, the focus always remains on what is lurking in the streets as opposed to what is actually going on in boardrooms. And so there’s this, you know, consistent perception that crime is what happens outside your front door. Meanwhile, so much criminal activity is completely missed.

Steven Renderos: Yeah. You know, it’s interesting, there was this graphic, it was one of those kind of, like, moving graphics that shows you the variances in different kinds of statistics over a period of time. And it was talking about the cause of death in the United States, and it had things like shark attacks and terrorist attacks. And the bar graph kept going higher and higher and higher until it got to cancer, which was ridiculously high in comparison to other things that folks might think, like, you know, terrorist attacks for example, or car accidents. And it makes me think about just if that is the reality, what should be true about how we are investing in solutions geared towards those causes of death. And I’m sure if we looked at the data, how much money are we spending and investing in anti-terrorist policing activities and technology versus cancer research? So there is that kind of difference in perception and reality. And I think about that also with police technology that’s being sold to our communities. There is a difference between the promise of the technology versus, in reality, what it’s actually doing. Ring doorbells for example, the promise there is that you are helping to keep your community safe. The reality is you have these law enforcement agreements with Amazon Ring that allow police to pull your video content at any given point and hold onto that video content for as long as they want and share it with any third-party vendor that they want. The reality of keeping your community safe, it’s actually just not true there because the police actually get to wield an immense amount of power over what they get to do with that data. The same is true with, like, Axon, one of the largest manufacturers of body-worn cameras in the United States. The promise there is these devices will increase police transparency and accountability, but in reality, it only helped to, like, really entrench the power of police in our communities. 
So there is this, I think, this lack of, this really big gap between what the promise of technology is versus the reality of what it’s delivering for our communities.

Adam: I want to talk about gentrification, which to me seems like one of the primary movers here, I don’t think the only, but I think one of the major motivations here. We cited several studies earlier in the show, and there’s actually been quite a bit of research over the past year about this after it being kind of a black hole for a while. I think people are really looking into this, which is the relationship between over-policing and gentrification and the ways in which over-policing oftentimes precedes and correlates with quote-unquote “development” of poor neighborhoods. It would logically follow then, and we’ve shown on the show before how, for example, Block Club Chicago’s crime reporting, if you sort of mapped it on a map of Chicago and you mapped the places where gentrification was the most prevalent, it would be almost one-to-one. And I want to sort of talk about how these snitch apps make that worse. Make the idea that, like, the sort of proverbial white homeowners, who are, you know, usually younger, more tech savvy, are more likely to be on the vanguard of gentrification and less likely to be, say, for example, in a sort of plantation manor out in the middle of the suburbs. I want to talk about that and the extent to which, and I know that there’s not a ton of data on this, but the extent to which in your work you suspect that the snitch apps and snitch culture make that phenomenon worse.